3 Do-it-yourself

Most of the methods presented in this book are available in both R and Python and can be used in a uniform way. But each of these languages has also many other tools for Explanatory Model Analysis.

In this book, we introduce various methods for instance-level and dataset-level exploration and explanation of predictive models. In each chapter, there is a section with code snippets for R and Python that shows how to use a particular method.

3.1 Do-it-yourself with R

In this section, we provide a short description of the steps that are needed to set-up the R environment with the required libraries.

3.1.1 What to install?

Obviously, the R software (R Core Team 2018) is needed. It is always a good idea to use the newest version. At least R in version 3.6 is recommended. It can be downloaded from the CRAN website https://cran.r-project.org/.

A good editor makes working with R much easier. There are plenty of choices, but, especially for beginners, consider the RStudio editor, an open-source and enterprise-ready tool for R. It can be downloaded from https://www.rstudio.com/.

Once R and the editor are available, the required packages should be installed.

The most important one is the DALEX package in version 1.0 or newer. It is the entry point to solutions introduced in this book. The package can be installed by executing the following command from the R command line:

Installation of DALEX will automatically take care about installation of other requirements (packages required by it), like the ggplot2 package for data visualization, or ingredients and iBreakDown with specific methods for model exploration.

3.1.2 How to work with DALEX?

To conduct model exploration with DALEX, first, a model has to be created. Then the model has got to be prepared for exploration.

There are many packages in R that can be used to construct a model. Some packages are algorithm-specific, like randomForest for random forest classification and regression models (Liaw and Wiener 2002), gbm for generalized boosted regression models (Ridgeway 2017), rms with extensions for generalized linear models (Harrell Jr 2018), and many others. There are also packages that can be used for constructing models with different algorithms; these include the h2o package (LeDell et al. 2019), caret (Kuhn 2008) and its successor parsnip (Kuhn and Vaughan 2019), a very powerful and extensible framework mlr (Bischl et al. 2016), or keras that is a wrapper to Python library with the same name (Allaire and Chollet 2019).

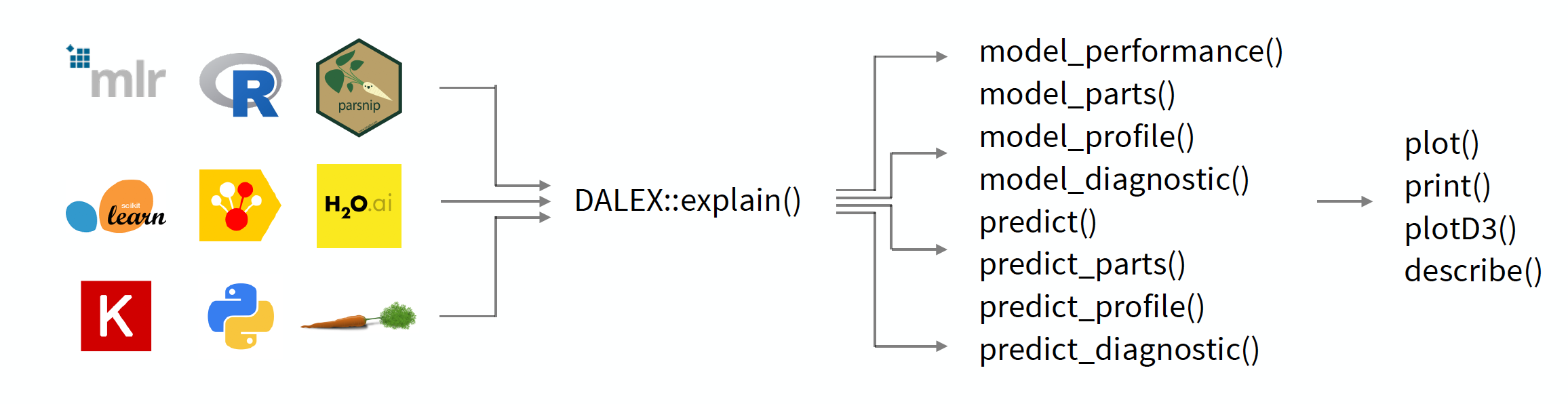

While it is great to have such a large choice of tools for constructing models, the disadvantage is that different packages have different interfaces and different arguments. Moreover, model-objects created with different packages may have different internal structures. The main goal of the DALEX package is to create a level of abstraction around a model that makes it easier to explore and explain the model. Figure 3.1 illustrates the contents of the package. In particular, function DALEX::explain is THE function for model wrapping. There is only one argument that is required by the function; it is model, which is used to specify the model-object with the fitted form of the model. However, the function allows additional arguments that extend its functionalities. They are discussed in Section 4.2.6.

Figure 3.1: The DALEX package creates a layer of abstraction around models, allowing you to work with different models in a uniform way. The key function is the explain() function, which wraps any model into a uniform interface. Then other functions from the DALEX package can be applied to the resulting object to explore the model.

3.1.3 How to work with archivist?

As we will focus on the exploration of predictive models, we prefer not to waste space nor time on replication of the code necessary for model development. This is where the archivist packages help.

The archivist package (Biecek and Kosinski 2017) is designed to store, share, and manage R objects. We will use it to easily access R objects for pre-constructed models and pre-calculated explainers. To install the package, the following command should be executed in the R command line:

Once the package has been installed, function aread() can be used to retrieve R objects from any remote repository. For this book, we use a GitHub repository models hosted at https://github.com/pbiecek/models. For instance, to download a model with the md5 hash ceb40, the following command has to be executed:

Since the md5 hash ceb40 uniquely defines the model, referring to the repository object results in using exactly the same model and the same explanations. Thus, in the subsequent chapters, pre-constructed models will be accessed with archivist hooks. In the following sections, we will also use archivist hooks when referring to datasets.

3.2 Do-it-yourself with Python

In this section, we provide a short description of steps that are needed to set-up the Python environment with the required libraries.

3.2.1 What to install?

The Python interpreter (Rossum and Drake 2009) is needed. It is always a good idea to use the newest version. Python in version 3.6 is the minimum recommendation. It can be downloaded from the Python website https://python.org/. A popular environment for a simple Python installation and configuration is Anaconda, which can be downloaded from website https://www.anaconda.com/.

There are many editors available for Python that allow editing the code in a convenient way. In the data science community a very popular solution is Jupyter Notebook. It is a web application that allows creating and sharing documents that contain live code, visualizations, and descriptions. Jupyter Notebook can be installed from the website https://jupyter.org/.

Once Python and the editor are available, the required libraries should be installed. The most important one is the dalex library, currently in version 0.2.0. The library can be installed with pip by executing the following instruction from the command line:

pip install dalexInstallation of dalex will automatically take care of other required libraries.

3.2.2 How to work with dalex?

There are many libraries in Python that can be used to construct a predictive model. Among the most popular ones are algorithm-specific libraries like catboost (Dorogush, Ershov, and Gulin 2018), xgboost (Chen and Guestrin 2016), and keras (Gulli and Pal 2017), or libraries with multiple ML algorithms like scikit-learn (Pedregosa et al. 2011).

While it is great to have such a large choice of tools for constructing models, the disadvantage is that different libraries have different interfaces and different arguments. Moreover, model-objects created with different library may have different internal structures. The main goal of the dalex library is to create a level of abstraction around a model that makes it easier to explore and explain the model.

Constructor Explainer() is THE method for model wrapping. There is only one argument that is required by the function; it is model, which is used to specify the model-object with the fitted form of the model. However, the function also takes additional arguments that extend its functionalities. They are discussed in Section 4.3.6. If these additional arguments are not provided by the user, the dalex library will try to extract them from the model. It is a good idea to specify them directly to avoid surprises.

As soon as the model is wrapped by using the Explainer() function, all further functionalities can be performed on the resulting object. They will be presented in subsequent chapters in subsections Code snippets for Python.

3.2.3 Code snippets for Python

A detailed description of model exploration will be presented in the next chapters. In general, however, the way of working with the dalex library can be described in the following steps:

- Import the

dalexlibrary.

- Create an

Explainerobject. This serves as a wrapper around the model.

- Calculate predictions for the model.

- Calculate specific explanations.

- Print calculated explanations.

- Plot calculated explanations.

References

Allaire, JJ, and François Chollet. 2019. keras: R Interface to Keras. https://CRAN.R-project.org/package=keras.

Biecek, Przemyslaw, and Marcin Kosinski. 2017. “archivist: An R Package for Managing, Recording and Restoring Data Analysis Results.” Journal of Statistical Software 82 (11): 1–28. https://doi.org/10.18637/jss.v082.i11.

Bischl, Bernd, Michel Lang, Lars Kotthoff, Julia Schiffner, Jakob Richter, Erich Studerus, Giuseppe Casalicchio, and Zachary M. Jones. 2016. “mlr: Machine Learning in R.” Journal of Machine Learning Research 17 (170): 1–5. http://jmlr.org/papers/v17/15-066.html.

Chen, Tianqi, and Carlos Guestrin. 2016. “XGBoost: A Scalable Tree Boosting System.” In Proceedings of the 22nd Acm Sigkdd International Conference on Knowledge Discovery and Data Mining, 785–94. KDD ’16. ACM. https://doi.org/10.1145/2939672.2939785.

Dorogush, Anna Veronika, Vasily Ershov, and Andrey Gulin. 2018. “CatBoost: gradient boosting with categorical features support.” CoRR abs/1810.11363. http://arxiv.org/abs/1810.11363.

Gulli, Antonio, and Sujit Pal. 2017. Deep Learning with Keras. Birmingham, UK: Packt Publishing Ltd.

Harrell Jr, Frank E. 2018. Rms: Regression Modeling Strategies. https://CRAN.R-project.org/package=rms.

Kuhn, Max. 2008. “Building Predictive Models in R Using the Caret Package.” Journal of Statistical Software 28 (5): 1–26. https://doi.org/10.18637/jss.v028.i05.

Kuhn, Max, and Davis Vaughan. 2019. Parsnip: A Common Api to Modeling and Analysis Functions. https://CRAN.R-project.org/package=parsnip.

LeDell, Erin, Navdeep Gill, Spencer Aiello, Anqi Fu, Arno Candel, Cliff Click, Tom Kraljevic, et al. 2019. H2o: R Interface for H2O. https://CRAN.R-project.org/package=h2o.

Liaw, Andy, and Matthew Wiener. 2002. “Classification and regression by randomForest.” R News 2 (3): 18–22. http://CRAN.R-project.org/doc/Rnews/.

Pedregosa, F., G. Varoquaux, A. Gramfort, V. Michel, B. Thirion, O. Grisel, M. Blondel, et al. 2011. “Scikit-Learn: Machine Learning in Python.” Journal of Machine Learning Research 12: 2825–30.

R Core Team. 2018. R: A Language and Environment for Statistical Computing. Vienna, Austria: R Foundation for Statistical Computing. https://www.R-project.org/.

Ridgeway, Greg. 2017. Gbm: Generalized Boosted Regression Models. https://CRAN.R-project.org/package=gbm.

Rossum, Guido van, and Fred L. Drake. 2009. Python 3 Reference Manual. Scotts Valley, CA: CreateSpace.